‘Broken’: Mum’s ‘worst nightmare’ revealed

A mother grieving for her 15-year-old “brave little girl”, who died by suicide, has told a parliamentary inquiry how her daughter felt “broken” by the bullying she faced on social media.

For its second public hearing on Friday, the probe continued to explore the “influence and impacts of social media on Australian society” by inviting representatives from Meta, Snap Inc, TikTok Australia and Google to give insight into the changing landscape.

The committee is working to learn how Meta’s decision to abandon deals under the News Media Bargaining Code will affect Australian media.

Snapchat’s plan to keep kids safe online

The inquiry was told about Matilda “Tilly” Rosewarne, who died by suicide after being bullied on social media, including Snapchat, in 2022.

Tilly’s mother, Emma Mason, wrote in her submission to the inquiry about the harm her “brave little girl” had faced in the lead-up to her death through bullying on social media platforms like Snapchat.

The inquiry was told Tilly’s privacy had been violated after a student at her school circulated a photo on Snapchat among her classmates, showing a body with the head cut from the frame, and claimed it was the teenager.

“This was her 12th attempt to end her life. She was just 15 years old,” Ms Mason wrote in her submission.

“She was exhausted, tired and broken.”

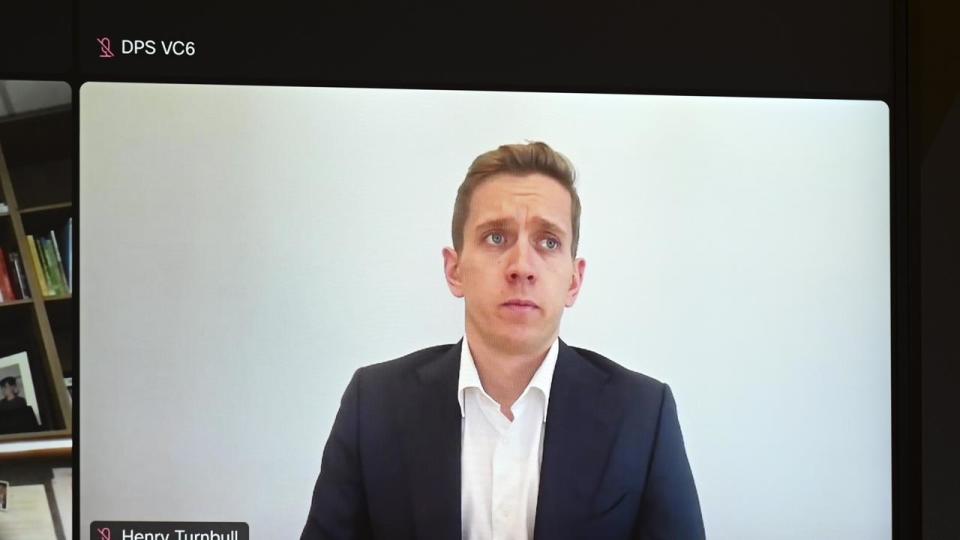

Snap Inc head of public policy Henry Turnbull told the inquiry that Snapchat, which is operated by Snap Inc, worked hard to provide users every opportunity to feel safe on its platform.

Mr Turnbull encouraged users to block and report accounts they felt were being harmful towards others.

“I think there’s a misconception on platforms like Snapchat that if you report somebody, they will know,” he said.

“That is not true. Within 10 minutes, we respond to reports.

“Snapchat launched new resources for people dealing with bullying, with Australian-specific content like Reach Out.”

Liberal MP Andrew Wallace, who shared the story with the inquiry on Friday, said Tilly’s death was a “parent’s worst nightmare”.

Mr Wallace said her story was “just one story of hundreds of stories” he heard from the community about the correlation between social media and teens who died by suicide.

Mr Turnbull said Snapchat was always working to improve its systems to ensure people felt safe online.

“This work is never done, bullying is unfortunately something that takes place in the real world and online,” he said.

“We do work hard to address it and I recognise how damaging it can be and how devastating it can be to those people affected.

“From our perspective, it’s about focusing on the actions that we’re taking to address these risks.”

Meanwhile, Google government affairs and public policy Australia and New Zealand director Lucinda Longcroft said the platform also held the safety of its users to the highest standard.

“We are certainly open to exploring any avenue of ensuring the safety of Australian users,” she said.

“We never feel we are doing enough to exercise our responsibility.

“We are constantly working because of the safety of children as the most vulnerable among our users, but the safety of all our users is of utmost concern and our responsibility.

“We invest time, resources, and people’s expertise in ensuring that our systems and our services or products are safe in the area of mental health and suicide.”

The inquiry was also told Google had 80 deals with 200 Australian news outlets under the News Media Bargaining Code.

TikTok’s place in Australian social media landscape

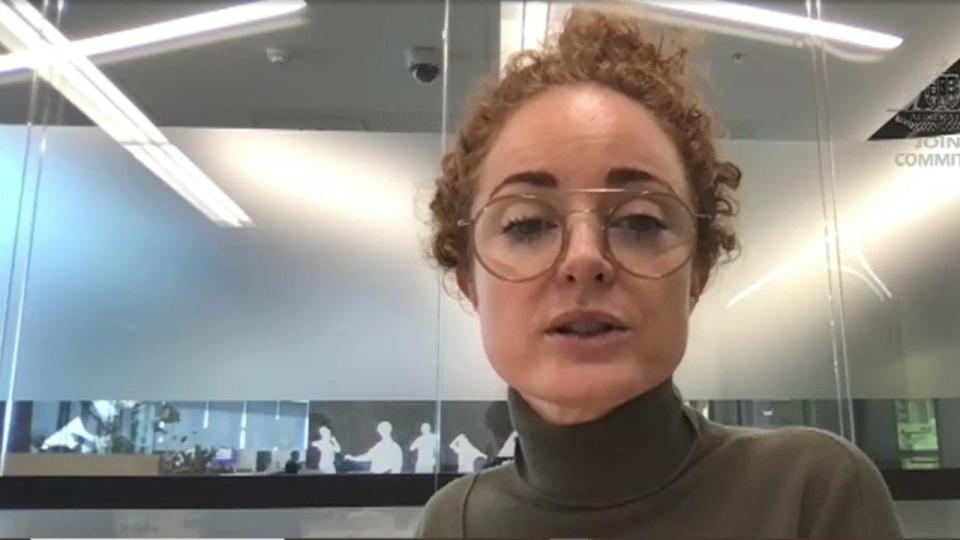

TikTok Australia public policy director Ella Woods-Joyce said the government’s media bargaining code might not apply to their platform due to its usership.

Ms Woods-Joyce told the committee that less than half of 1 per cent of news seen on TikTok comes from credible news publishers.

She said she wasn’t able to “speculate or provide insight” into how the new code would impact TikTok.

“We’re not the place for news,” she said.

“We’re firmly of the view that we’re not a go-to destination for editorial news, that our users are coming for entertainment and lifestyle and other content areas.”

Ms Woods-Joyce confirmed TikTok had not had any conversations about the platform exiting the Australian market.

The social media site had also removed 1.2 million videos before they were publicly viewed in Australia because they’d breached guidelines, Ms Woods-Joyce said.

Meta moves away from news

Meta regional director of policy in Australia, Japan, Korea, New Zealand and Pacific Islands, Mia Garlick, said Facebook users accessing the Facebook News portal had dropped by 80 per cent.

Ms Garlick said while Meta wasn’t trying to prevent users from accessing news on Facebook, the business found only 3 per cent of users were accessing news from its platforms after it changed how public content was consumed.

“There’s a large number of channels that people can get news content from,” she told the inquiry.

Ms Garlick said there’d been a “massive shift” to short-form video by the “vast majority” of its users.

“We made a change to reduce the amount of public content on our services, which included news content, and then it dropped down to 3 per cent,” she said.

When pressed on who was responsible for deciding to remove Facebook News, Ms Garlick said no one person was in charge of those decisions.

“If it was one person who was responsible for all those things, I think that would be a concern,” she said.

“What we have tried to do is establish systems and processes that have a range of different inputs and that can be tested and can be held accountable.”

Safety protocols for teens and children

Meta vice-president and global head of safety Antigone Davis told the committee that she didn’t think social media had harmed children.

“I think that social media has provided tremendous benefits,” Ms Davis said.

“I think that issues of teen mental health are complex and multifactorial. I think that it is our responsibility as a company to ensure that teens can take advantage of those benefits of social media in a safe and positive environment.”

During her evidence, Liberal MP Andrew Wallace told Ms Davis “you cannot be serious”.

“You cannot be taken seriously Ms Davis when you say that,” he said.

Ms Davis said Meta was “committed to trying to provide a safe and positive experience” for all users, especially teenagers.

“For example, if a teen is struggling with an eating disorder, and they’re on our platform, we want to try to put in place safeguards to ensure that they have a positive experience and that we are (not) contributing or exacerbating that situation that (they) may be dealing with,” she said.

Senator Sarah Henderson told the inquiry she’d recently seen a video on Instagram live stream that depicted the “most horrendous bashing of the school student by another student”.

Senator Henderson asked Meta what type of “mechanisms” were in place to stop these videos from being live streamed.

“Because the bottom line is you are not stopping this and there are multiple cases where it demonstrates your failure to stop this sort of conduct online,” she said.

Ms Davis said Meta took videos like the one Senator Henderson saw “very seriously”.

“We have policies against that kind of content,” she said.

“We build classifiers to try to identify that content, proactively.”

Tackling scams online

Deputy chair Senator Sarah Hanson-Young also questioned Meta’s advertising business model and whether it profited off scams and “lies”.

Ms Garlick said Meta was not making money from scams and dishonest posts.

“We have policies and systems and tools to do everything we can to prevent those ads and also look at in terms of what types of strategic network disruptions that we can do to prevent those from being on our services,” Ms Garlick said.

But Senator Hanson-Young rejected her claim, stating she believed it was a “dishonest answer”.

“You’re an advertiser, you’re an advertising business. You take money from people to run ads and some of those ads are telling lies,” she said.

“They’re getting to them. They’re using your technology, they’re using your platform.”

Committee chair Kate Thwaites said the inquiry aimed to learn how social media was impacting society.

“Australians are concerned about the impact social media is having across many areas of our community,” Ms Thwaites said on June 20 ahead of the first public hearing.

“The committee will be hearing about how social media companies operate in Australia, the impact that has, and considering what changes we need to see.”

The committee is expected to table its interim report on or before August 15, with the final report due on or before November 18.

1800RESPECT national helpline: 1800 737 732

Sexual Assault Crisis Line (VIC): 1800 806 292

https://www.sacl.com.au/

Lifeline (24-hour crisis line): 131 114

Beyond Blue: 1300 22 4636 or beyondblue.org.au

Beyond Blue’s coronavirus support service: 1800 512 348 or coronavirus.beyondblue.org.au

Kids Helpline: 1800 55 1800 or kidshelpline.com.au

Headspace: 1800 650 890 or headspace.org.au